Migrating To React + MobX While Shipping New Features

A year ago our front-end was written in a cumbersome combination of Backbone, TypeScript, and a custom state management layer. It was maintainable, but we wanted to ship features faster than it would let us. We wanted to migrate to a React + MobX architecture, but we couldn’t afford to spend six months rewriting most of our codebase at the expense of delivering features.

We cooked up some techniques for doing it piece by piece that let us build new components in React and lazily migrate existing chunks of interface logic. Over the past eight months, we’ve migrated most of our front-end while shipping big new features along the way, and we’re arriving at a codebase that engineers are excited to contribute to.

Don’t do a rewrite

When introducing architectural changes to a complex application that touch the state and view layers, it’s easy to accidentally end up rewriting the whole thing. You start by rewriting one thing, run into a dependency that’s muddying your design, start rewriting that dependency, and repeat. The workflow is attractive because the work feels productive — you’re building things with an attention to detail and quality you couldn’t afford before, and you don’t have to compromise architectural purity for interoperability with “the old code.”

We had the good fortune of already having been burned by migrating our front-end to TypeScript. That project ballooned in scope and took longer than we expected, holding up a lot of really cool features along with it. So we were cautious when we started thinking about how to improve our architecture to increase feature velocity. We knew we couldn’t fall into the trap of rewriting all functionality before shipping new features again, but it wasn’t clear how to make such a fundamental change piece by piece.

With no realistic alternative, we found ourselves facing a new and exciting engineering challenge: how to migrate to our new architecture while operating inside our old one. We settled on two questions to guide our decisions during the migration: “Is the API for the thing we’re working on being influenced by the existing architecture in a way that wouldn’t have happened in a rewrite?” and “If everything else around it had been rewritten perfectly, how hard would it be to incorporate this thing into that architecture?” As long as engineers keep both questions in mind when building and migrating components, they can feel confident that their work will outlast the transition period (and that the transition has an end).

We found that to build consistently according to these guidelines, we needed a toolkit of shims and patterns that kept us on a sustainable path forward.

Rendering to the DOM

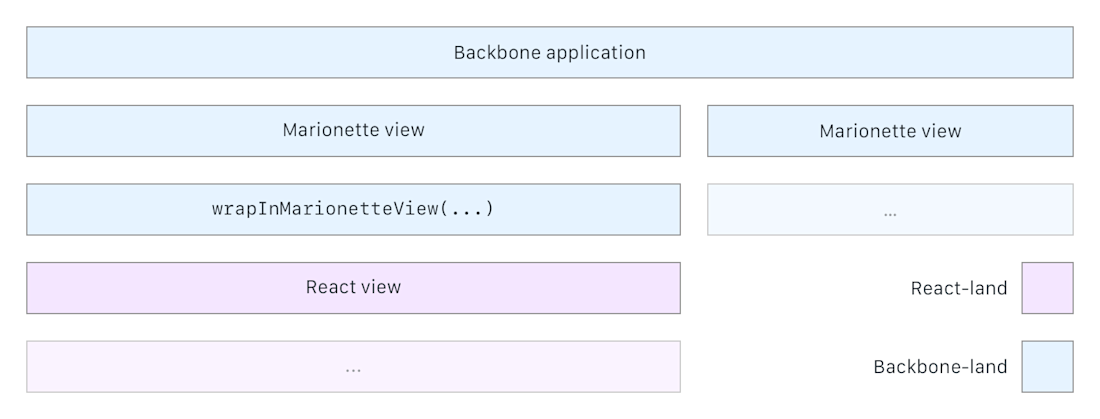

The first thing you want to do after running npm install react react-dom is find somewhere in your code to call ReactDOM.render. Since we were already using Marionette to manage the lifecycle of our views, there was a nice opportunity to tie our React components into our view hierarchy in a pretty contained way. We wrote a wrapper that takes a component and returns a Marionette view that mounts and unmounts that component in the normal Marionette lifecycle hooks.

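```typescript
import * as React from "react";
import * as ReactDOM from "react-dom";
import * as Marionette from "backbone.marionette";

// Returns a Marionette view class that mounts `component` (with `props`) into its
// own el when shown and unmounts it when destroyed.
// React.ComponentType<P> covers both class and function components.
function wrapInMarionetteView<P>(component: React.ComponentType<P>, props?: P) {
  return class extends Marionette.View {
    get className(): string {
      return "react-view";
    }

    // Called after a region shows this view: hand the el over to React.
    onShow() {
      ReactDOM.render(React.createElement(component, props), this.el);
    }

    // Called when the view is destroyed: let React clean up its tree.
    onDestroy() {
      ReactDOM.unmountComponentAtNode(this.el);
    }
  };
}
```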

This wrapper has served us pretty well. We can build our React components as if they’re already being rendered in a full React architecture—receiving state through props and all—and we can leave any glue code in the legacy view rendering the component. One small caveat is that Marionette views like to add a div to the DOM as their el property, so where we might want .title-bar > button.save-report, we have .title-bar > .react-view > button.save-report. Considering that React expects full control of its root element anyway, this div is necessary no matter our implementation.
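At its core the wrapper only translates one framework’s lifecycle hooks into another’s mount and unmount calls. The dependency-free sketch below isolates that idea so it can run without a browser; HostElement, Mountable, LegacyView, and wrapInLegacyView are hypothetical stand-ins for the DOM element, ReactDOM, and Marionette, not real APIs.

```typescript
// Stand-in for a DOM element so the sketch runs without a browser.
interface HostElement {
  content: string | null;
}

// Anything that can render into, and clean up, a host element.
// In the article this role is played by ReactDOM.render / unmountComponentAtNode.
interface Mountable<P> {
  mount(el: HostElement, props: P): void;
  unmount(el: HostElement): void;
}

// Hypothetical legacy base class with Marionette-style lifecycle hooks.
abstract class LegacyView {
  readonly el: HostElement = { content: null };
  abstract onShow(): void;
  abstract onDestroy(): void;
}

// The adapter: map the legacy lifecycle hooks onto mount/unmount.
function wrapInLegacyView<P>(component: Mountable<P>, props: P) {
  return class extends LegacyView {
    onShow(): void {
      component.mount(this.el, props);
    }
    onDestroy(): void {
      component.unmount(this.el);
    }
  };
}

// Example component that renders a labelled button into its host.
const saveButton: Mountable<{ label: string }> = {
  mount(el, props) {
    el.content = `<button class="save-report">${props.label}</button>`;
  },
  unmount(el) {
    el.content = null;
  },
};

const SaveButtonView = wrapInLegacyView(saveButton, { label: "Save" });
const view = new SaveButtonView();
view.onShow(); // view.el.content now holds the button markup
view.onDestroy(); // view.el.content is null again
```

Because the returned class closes over the component and its props, the legacy side never needs to know what kind of component it is hosting.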

Here’s a diagram of how it’s used inside a view hierarchy:

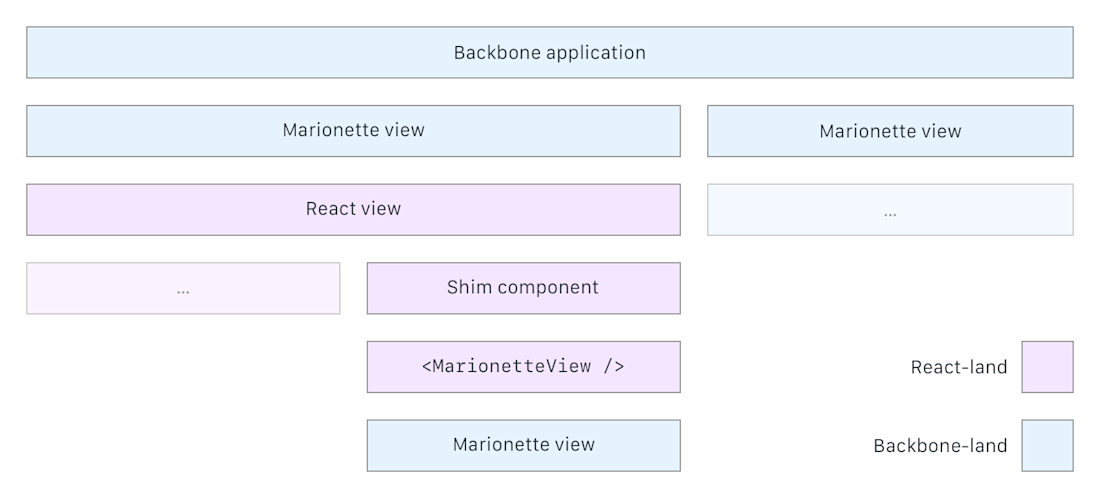

We eventually encountered an area where a piece of the interface was reused in several places in the app and would take more time than we were willing to spend to duplicate its functionality with our new tools. We weren’t ready to commit to rewriting that piece of the interface, but we still wanted to migrate the views that contained it. Maybe you can see where this is going? In the same way that we used Marionette’s lifecycle hooks to create a shim for React components, we decided to use React’s lifecycle hooks to create a shim for Marionette views. This ended up a tad more complicated than our React shim.

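```tsx
import * as React from "react";
import * as Backbone from "backbone";
import * as Marionette from "backbone.marionette";
import { v4 as uuid } from "uuid";

interface IMarionetteViewProps {
  // Factory for the legacy view to show inside this component.
  viewFn: () => Marionette.View<Backbone.Model>;
  // Optional callback ref to the wrapping div.
  regionRef?: (element: HTMLDivElement) => void;
}

class MarionetteView extends React.Component<IMarionetteViewProps, {}> {
  id: string;
  regionManager: Marionette.RegionManager;

  constructor(props: IMarionetteViewProps) {
    super(props); // pass props through so this.props is set during construction
    this.regionManager = new Marionette.RegionManager();
    this.id = "marionette-view-" + uuid();
  }

  componentDidMount(): void {
    // The div is now in the DOM, so a region can target it by id.
    this.regionManager.addRegion("entryPoint", "#" + this.id);
    this.regionManager.get("entryPoint").show(this.props.viewFn());
  }

  componentWillUnmount(): void {
    // Removing the region also empties it, tearing down the view it was showing.
    this.regionManager.removeRegion("entryPoint");
  }

  shouldComponentUpdate(): boolean {
    // React never re-renders this div; Marionette owns everything inside it.
    return false;
  }

  render(): JSX.Element {
    return <div id={this.id} ref={this.props.regionRef} />;
  }
}
```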

Marionette exposes the RegionManager it uses for view lifecycle management, so we use it to provide just enough context to render Marionette views as usual, without modifying them.

It’s a bit hacky, but it works. A component rendering the MarionetteView has to do a little bit of work in the componentDidMount and componentWillUnmount hooks to listen to state changes in the view it wraps. We found that it was useful to encapsulate those hooks inside a separate component responsible for rendering the view.
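The subscription work in those two hooks can be packaged into a small host so that callers never touch on/off directly. Below is a dependency-free sketch of that idea, assuming the wrapped legacy view exposes Backbone-style on/off events; Emitter, LegacyViewHost, and the "filter:changed" event name are hypothetical, and the host is a plain class standing in for a React component.

```typescript
type Handler = (...args: unknown[]) => void;

// Minimal Backbone-style event emitter standing in for a legacy view.
class Emitter {
  private readonly handlers = new Map<string, Set<Handler>>();

  on(event: string, fn: Handler): void {
    let set = this.handlers.get(event);
    if (!set) {
      set = new Set<Handler>();
      this.handlers.set(event, set);
    }
    set.add(fn);
  }

  off(event: string, fn: Handler): void {
    this.handlers.get(event)?.delete(fn);
  }

  trigger(event: string, ...args: unknown[]): void {
    this.handlers.get(event)?.forEach((fn) => fn(...args));
  }
}

// Hypothetical host that owns the subscription lifecycle.
// componentDidMount/componentWillUnmount mirror the React hooks named in the text.
class LegacyViewHost {
  constructor(
    private readonly view: Emitter,
    private readonly eventName: string,
    private readonly listener: Handler,
  ) {}

  componentDidMount(): void {
    this.view.on(this.eventName, this.listener);
  }

  componentWillUnmount(): void {
    this.view.off(this.eventName, this.listener);
  }
}

const legacyView = new Emitter();
const received: unknown[] = [];
const host = new LegacyViewHost(legacyView, "filter:changed", (value) => {
  received.push(value);
});

host.componentDidMount();
legacyView.trigger("filter:changed", "last-7-days"); // forwarded to the listener
host.componentWillUnmount();
legacyView.trigger("filter:changed", "last-30-days"); // ignored: already unsubscribed
// received is ["last-7-days"]
```

Keeping on and off in one place makes it hard to leak a listener when the React side unmounts before the legacy view is destroyed.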

Put together, these components all look like this in diagram form:

With these two shims, there are a couple of places in our code where we’re rendering Marionette views inside React components inside Marionette views. This seems a little crazy at first, but the structure gives us a lot of control over exactly how much work to put into refactoring a given part of the interface without getting sucked into converting the world to React. I want to point out that this isn’t particularly revolutionary or glamorous work, but these shims are foundational to a reasonable migration strategy.

Worrying less about application state

When we started migrating features to React that had to manipulate what was essentially global state, we needed patterns that kept new components in sync with our old architecture with a minimum of flimsy glue code. There’s a whole class of bugs where users change something in one part of the interface and then see a stale version of it on another page. It looks pretty silly and erodes users’ trust in the application, and we didn’t want our changes to state management to introduce more of these bugs.

As part of our broader architectural change, we opted to use MobX for state management for its dead simple API and graceful degradation: it doesn’t require any changes to how you access data on your models. MobX works with React by tracking which observable properties a component reads in its render function and re-rendering that component when any of those properties change. We can pretty easily spruce up our models with observable properties as needed and use them to build very clean React components. Since MobX doesn’t change type signatures on the models, we can still use them as before in our Backbone views and hook into the right events to keep those views in sync when necessary.
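To make the tracking model concrete, here is a toy, dependency-free version of the idea: reads performed while a reaction runs are recorded, and a write re-runs only the reactions that performed those reads. TrackedValue and runTracked are hypothetical simplifications written for illustration, not MobX’s implementation, which also cleans up stale dependencies, batches updates, and much more.

```typescript
// Toy model of dependency tracking; deliberately simplified.
type Reaction = () => void;
let current: Reaction | null = null;

class TrackedValue<T> {
  private readonly observers = new Set<Reaction>();

  constructor(private value: T) {}

  get(): T {
    // Reading inside a running reaction records a dependency on this value.
    if (current) this.observers.add(current);
    return this.value;
  }

  set(next: T): void {
    if (next === this.value) return;
    this.value = next;
    // Re-run every reaction that has read this value.
    Array.from(this.observers).forEach((reaction) => reaction());
  }
}

// Runs fn now, and again whenever a value it read changes.
// (Stale dependencies are never removed in this toy.)
function runTracked(fn: () => void): void {
  const reaction: Reaction = () => {
    const previous = current;
    current = reaction;
    try {
      fn();
    } finally {
      current = previous;
    }
  };
  reaction();
}

const title = new TrackedValue("Weekly signups");
const unrelated = new TrackedValue(0);
const renders: string[] = [];

// The "render function" reads title but not unrelated.
runTracked(() => {
  renders.push(`<h1>${title.get()}</h1>`);
});

title.set("Daily signups"); // triggers a re-render
unrelated.set(1); // no re-render: never read during render
// renders is ["<h1>Weekly signups</h1>", "<h1>Daily signups</h1>"]
```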

We are progressively refactoring our Backbone collections of all of the business objects in the interface—things like reports, event definitions, segment definitions, categories—into their own singleton stores that expose observable properties. The stores allow us to retrieve gold copies of the objects and perform general mutation and persistence operations on them. They usually look something like this (implementation details omitted since they aren’t particularly interesting here):

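```typescript
import { action, observable, ObservableMap } from "mobx";
// Report is the app's Backbone model; its import is omitted here.

class ReportStore {
  reportsById: ObservableMap = observable.map();

  // Mutation and persistence operations; implementation details omitted.
  @action addReport(report: Report): Promise<void> { /* ... */ }
  @action saveReport(report: Report): Promise<void> { /* ... */ }
  @action deleteReport(report: Report): Promise<void> { /* ... */ }
}

// Export a singleton for app code, plus the class itself.
const singleton = new ReportStore();
export { singleton as reportStore, ReportStore };
```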

For compatibility with Backbone views that don’t perform any manipulations, we use computed properties to create the collections those views expect:

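```typescript
class ReportStore {
  reportsById: ObservableMap;

  // Read-only Backbone view of the store for legacy views that never mutate it.
  // Array.from handles values() returning either an array (older MobX) or an iterator (MobX 4+).
  @computed get asCollectionDeprecated(): Backbone.Collection {
    return new Backbone.Collection(Array.from(this.reportsById.values()));
  }

  /* ... */
}
```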

This works well enough, but it’s not flawless, especially if the views that refer to the Backbone collection depend on any events it might fire to re-render. Fortunately, we only had one view in our interface that depended on this collection, so we didn’t have to do more work here.
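Events are the weak spot because the computed getter builds a brand-new collection from the map rather than mutating an existing one, so a view that captured the collection earlier and listens for its events never hears about changes made through the store. The toy model below reproduces that failure mode without MobX or Backbone; MiniCollection and ToyReportStore are hypothetical stand-ins.

```typescript
type Listener = () => void;

// Minimal stand-in for a Backbone.Collection: holds models and fires an "add" callback.
class MiniCollection<T> {
  private readonly listeners: Listener[] = [];

  constructor(readonly models: T[]) {}

  onAdd(fn: Listener): void {
    this.listeners.push(fn);
  }

  add(model: T): void {
    this.models.push(model);
    this.listeners.forEach((fn) => fn());
  }
}

// Source of truth is a Map; the collection is derived afresh, like asCollectionDeprecated.
class ToyReportStore {
  readonly reportsById = new Map<string, string>();

  get asCollection(): MiniCollection<string> {
    return new MiniCollection(Array.from(this.reportsById.values()));
  }
}

const store = new ToyReportStore();
store.reportsById.set("r1", "Signups");

// A legacy view grabs the collection once and listens for "add" to re-render.
const legacyCollection = store.asCollection;
let rerenders = 0;
legacyCollection.onAdd(() => {
  rerenders += 1;
});

// New code adds a report through the store, not through the collection.
store.reportsById.set("r2", "Retention");
// rerenders is still 0 and legacyCollection.models is still ["Signups"];
// only a freshly derived store.asCollection contains "Retention".
```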

In the cases where the Backbone views do perform heavy manipulations on the resources and potentially handle persistence themselves, we’ve done something a bit weirder. The goal has been to create MobX-ready stores of these resources without having to rewrite large amounts of the tricky logic in old views, so we had to preserve the Backbone collections in some areas. Our solution was to make the map of objects exposed by the store a computed property derived from the original Backbone collection and expose persistence methods that interact with the Backbone collection under the hood:

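```typescript
import * as _ from "lodash";
import { action, computed, observable, ObservableMap } from "mobx";
// Cache (the legacy Backbone cache) and EventDefinition are app modules; imports omitted.

class EventDefinitionStore {
  // Derived from the legacy Backbone collection: id -> first model with that id.
  @computed get eventDefinitionsById(): ObservableMap {
    return observable.map(
      _(Cache.definedEvents.models)
        .groupBy((event) => event.id)
        .mapValues(_.first)
        .value()
    );
  }

  // Persists through the Backbone model, then updates the legacy collection.
  @action saveEventDefinition(event: EventDefinition): Promise<void> {
    // Backbone's save() returns a jqXHR thenable; Promise.resolve adopts it
    // so callers get a standard Promise.
    return Promise.resolve(event.save()).then(
      action(() => {
        /* Replace event in Backbone collection */
      })
    );
  }
}
```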

At a high level, this allows us to build robust, future-ready components off the store without having to interact with the Backbone.Collection to update state.
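The groupBy(...).mapValues(_.first) chain in eventDefinitionsById turns the collection’s models into an id → model lookup that keeps the first model seen for each id. A dependency-free equivalent using a Map makes that behavior explicit; indexById is a hypothetical helper name, not part of the original code.

```typescript
interface HasId {
  id: string;
}

// Build an id -> item index that keeps the FIRST item seen for each id,
// matching lodash's groupBy(...).mapValues(first).
function indexById<T extends HasId>(items: readonly T[]): Map<string, T> {
  const index = new Map<string, T>();
  for (const item of items) {
    if (!index.has(item.id)) {
      index.set(item.id, item);
    }
  }
  return index;
}

const events = [
  { id: "signup", name: "Sign Up" },
  { id: "login", name: "Log In" },
  { id: "signup", name: "Sign Up (duplicate)" },
];

const byId = indexById(events);
// byId.size is 2 and byId.get("signup")?.name is "Sign Up"
```

If ids are guaranteed unique, lodash’s keyBy produces the same lookup in one call; the two differ only when duplicates exist, since keyBy keeps the last match.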

The catch is that a Backbone.Collection is not observable, so eventDefinitionsById won’t recompute when an event definition is added to or removed from the Cache.definedEvents collection. We dug a bit into the MobX docs and found a little thing called an Atom, essentially a tool for making arbitrary data sources look observable to MobX. If an atom’s reportObserved is called from inside a computed value or observer, MobX remembers that dependency and re-runs the computation when the atom’s reportChanged is called. Some (but not too much) hackery followed:

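```typescript
import * as Backbone from "backbone";
// createAtom is the MobX 4+ factory; MobX 3 code used `new Atom(name)` instead.
import { createAtom, IAtom } from "mobx";

class Cache {
  private definedEventsAtom: IAtom;
  private realDefinedEvents!: Backbone.Collection;

  get definedEvents(): Backbone.Collection {
    // Tell MobX that whatever computed/observer is running depends on this collection.
    this.definedEventsAtom.reportObserved(); // <------ Wow, easy!
    return this.realDefinedEvents;
  }

  set definedEvents(val: Backbone.Collection) {
    // Stop listening to a previous collection before swapping it out.
    if (this.realDefinedEvents) this.realDefinedEvents.off(undefined, undefined, this);
    this.realDefinedEvents = val;
    // Signal membership changes; "reset" fires neither "add" nor "remove".
    val.on("add remove reset", () => {
      this.definedEventsAtom.reportChanged(); // <------ Nice!
    }, this);
    // Replacing the whole collection is itself a change.
    this.definedEventsAtom.reportChanged();
  }

  constructor() {
    this.definedEventsAtom = createAtom("Defined Events");
    this.definedEvents = new Backbone.Collection();
  }
}

const singleton = new Cache();
export { singleton as Cache };
```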

This works like a charm. Legacy code that interacts heavily with the Cache.definedEvents collection can go about its business, and new development in React can interact with the higher-level store without bending to fit the Backbone collection underneath it.
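The two atom calls are all the bridge needs. The toy model below mirrors the Cache class: the getter reports observation, the collection’s own event hook reports change, and a reaction that read the collection re-runs after a legacy-style mutation that never touches the tracking code. ToyAtom, runTracked, PlainList, and ToyCache are hypothetical simplifications for illustration, not MobX’s internals.

```typescript
// Toy model of an atom bridge; not MobX's internals.
type Reaction = () => void;
let running: Reaction | null = null;

// Runs fn now, and again whenever an atom it observed reports a change.
function runTracked(fn: () => void): void {
  const reaction: Reaction = () => {
    const previous = running;
    running = reaction;
    try {
      fn();
    } finally {
      running = previous;
    }
  };
  reaction();
}

class ToyAtom {
  private readonly observers = new Set<Reaction>();

  reportObserved(): void {
    // Remember whichever reaction is currently reading this atom.
    if (running) this.observers.add(running);
  }

  reportChanged(): void {
    // Re-run every reaction that observed this atom.
    Array.from(this.observers).forEach((reaction) => reaction());
  }
}

// Non-observable list that only knows how to fire callbacks, like a Backbone.Collection.
class PlainList<T> {
  readonly items: T[] = [];
  private readonly listeners: Array<() => void> = [];

  on(fn: () => void): void {
    this.listeners.push(fn);
  }

  add(item: T): void {
    this.items.push(item);
    this.listeners.forEach((fn) => fn());
  }
}

// Mirrors the Cache class: getter reports observation, event hook reports change.
class ToyCache {
  private readonly atom = new ToyAtom();
  private readonly list = new PlainList<string>();

  constructor() {
    this.list.on(() => this.atom.reportChanged());
  }

  get definedEvents(): PlainList<string> {
    this.atom.reportObserved();
    return this.list;
  }
}

const cache = new ToyCache();
const counts: number[] = [];

runTracked(() => {
  counts.push(cache.definedEvents.items.length);
});

cache.definedEvents.add("signup"); // legacy-style mutation; no tracking code involved
// counts is [0, 1]
```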

As you can see, it takes a bit of hacking and shuffling things under the table inside dynamic getters and setters to build an API before the architecture around it fully exists. The work is a bit unsavory, but we’re super excited that it has enabled us to build new functionality within a complex application at speeds comparable to working with a fresh slate.

Stay tuned for our next post where we’ll describe how we’ve been using Storybook to make a suite of presentational components, wrangle errant stylesheets mid-migration, and prepare for browser testing.

If you’ve also spent some time migrating an application in flight, please reach out! I’d love to compare notes. Oh, and by the way, we’re hiring! Come help make it easier for companies big and small to make data-driven decisions with us.